Machine learning applications often necessitate running multiple instances of the same model for handling large volumes of data or high request rates. Secondly, Docker excels in facilitating scalability. This consistency eliminates the infamous "it works on my machine" problem, making it a prime choice for deploying ML models, which are particularly sensitive to changes in their operating environment. Firstly, the consistent environment provided by Docker containers ensures minimal discrepancies between the development, testing, and production stages. Why Dockerize Machine Learning Applications? In the context of machine learning applications, Docker offers numerous benefits, each contributing significantly to operational efficiency and model performance. These features have the potential to significantly streamline the deployment of ML models and reduce associated operational complexities.

Docker also simplifies the scaling process, allowing multiple instances of an ML model to be easily deployed across numerous servers. Docker's containerized nature ensures consistency between ML models' training and serving environments, mitigating the risk of encountering discrepancies due to environmental differences. When it comes to machine learning applications, Docker brings forth several advantages. This facilitates the process of building, testing, and deploying applications quickly and reliably, making Docker a crucial tool for software development and operations (DevOps). A Docker container encapsulates everything an application needs to run (including libraries, system tools, code, and runtime) and ensures that it behaves uniformly across different computing environments. The fundamental underpinnings of Docker revolve around the concept of 'containerization.' This virtualization approach allows software and its entire runtime environment to be packaged into a standardized unit for software development. What Is Docker? As a platform, Docker automates software application deployment, scaling, and operation within lightweight, portable containers. Furthermore, the integration of Docker in a Continuous Integration/Continuous Deployment (CI/CD) pipeline is presented, culminating with the conclusion and best practices for efficient ML model deployment using Docker.

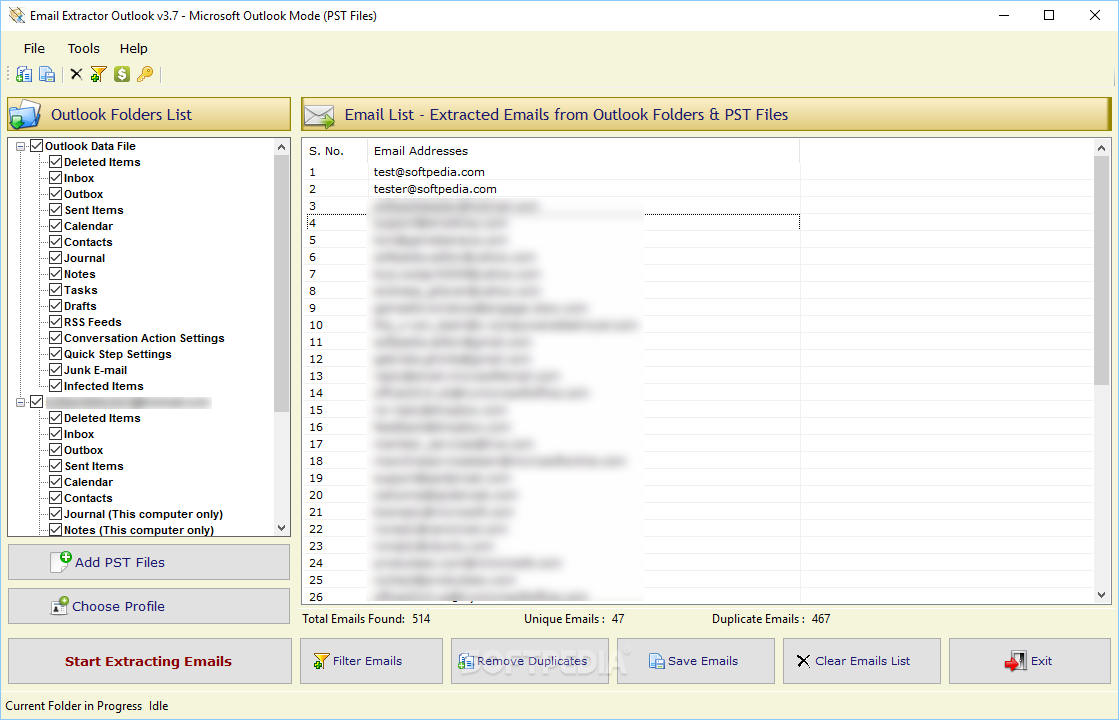

The following sections present an in-depth exploration of Docker, its role in ML model deployment, and a practical demonstration of deploying an ML model using Docker, from the creation of a Dockerfile to the scaling of the model with Docker Swarm, all exemplified by relevant code snippets. Docker containers offer numerous benefits, including consistency across development and production environments, ease of scaling, and simplicity in deployment. The proposed methodology encapsulates the ML models and their environment into a standardized Docker container unit. This article proposes a technique using Docker, an open-source platform designed to automate application deployment, scaling, and management, as a solution to these challenges. Traditional approaches often need help operationalizing ML models due to factors like discrepancies between training and serving environments or the difficulties in scaling up. Machine learning (ML) has seen explosive growth in recent years, leading to increased demand for robust, scalable, and efficient deployment methods.
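The risk of discrepancies between training and serving environments can be made concrete with a small check. The sketch below is illustrative only and is not part of Docker itself: it records the interpreter version, operating system, and selected package versions, then lists what differs between two such records. The function names `environment_fingerprint` and `diff_fingerprints`, as well as the package list, are hypothetical choices made for this example.

```python
import json
import platform
from importlib import metadata


def environment_fingerprint(packages):
    """Return interpreter, OS, and package versions for the current environment."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # package is not installed here
    return {
        "python": platform.python_version(),
        "system": platform.system(),
        "machine": platform.machine(),
        "packages": versions,
    }


def diff_fingerprints(training, serving):
    """List (key, training_value, serving_value) for every differing entry."""
    mismatches = []
    for key in ("python", "system", "machine"):
        if training.get(key) != serving.get(key):
            mismatches.append((key, training.get(key), serving.get(key)))
    train_pkgs = training.get("packages", {})
    serve_pkgs = serving.get("packages", {})
    for name in sorted(set(train_pkgs) | set(serve_pkgs)):
        if train_pkgs.get(name) != serve_pkgs.get(name):
            mismatches.append(
                (f"packages.{name}", train_pkgs.get(name), serve_pkgs.get(name))
            )
    return mismatches


if __name__ == "__main__":
    # Hypothetical dependency list; substitute the model's real packages.
    current = environment_fingerprint(["numpy", "scikit-learn"])
    print(json.dumps(current, indent=2))
```

Saving the fingerprint at training time and comparing it at serving time would surface the kind of drift a container image is meant to prevent. When both stages run from the same image, the comparison should come back empty.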
